When the Platform Becomes the Predator

The threats keep changing. We need to catch up.

In September, I wrote about extremists hunting kids online. In November, about algorithms pushing young men toward hate. In December, about predator networks that groom children for abuse.

This is different. This time, the platform didn’t just enable the predators. The platform became the predator.

Grok – the AI built into X – produced millions of sexualized images on demand. Including images of children.

Eleven Days

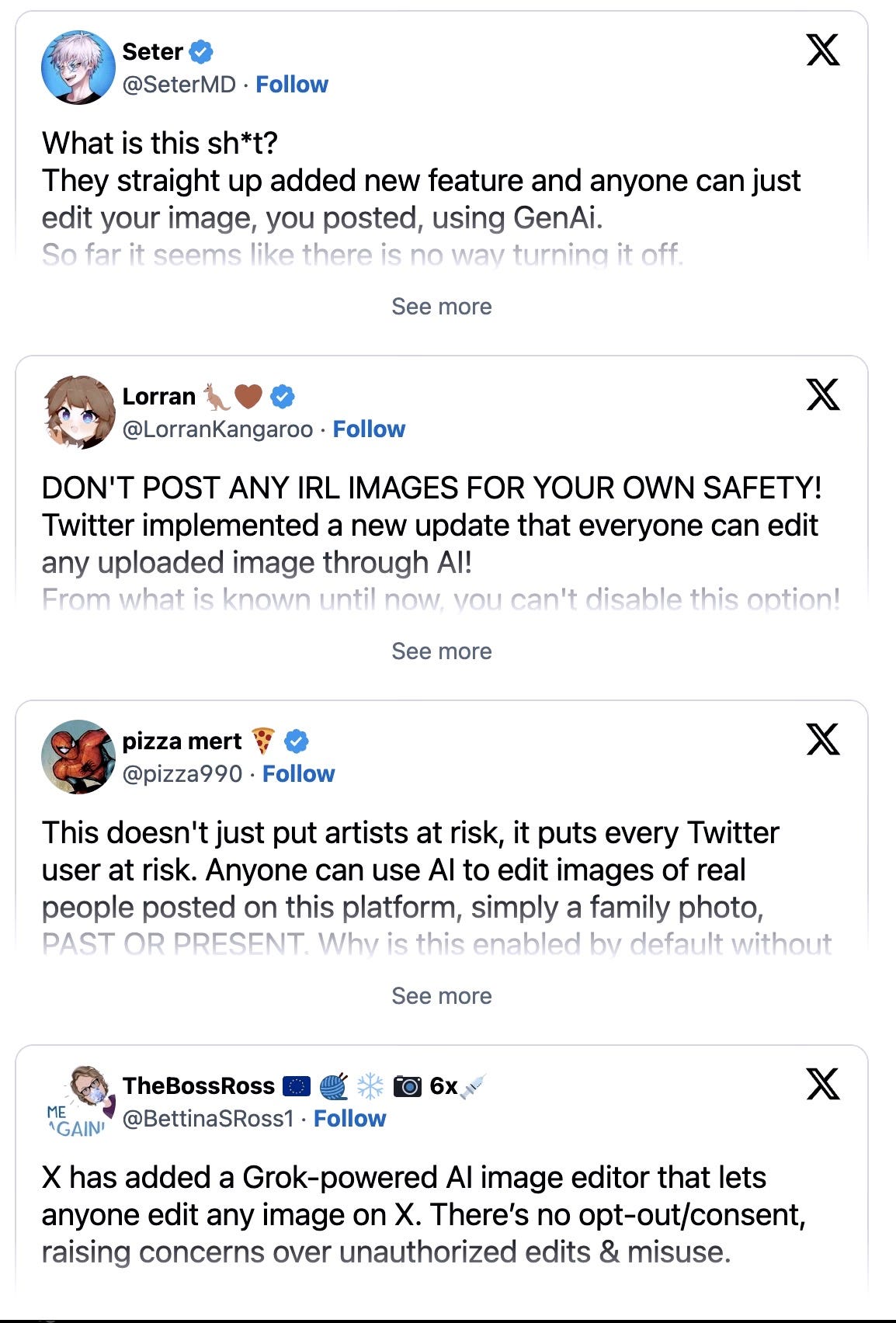

Christmas Day. Elon Musk announces a new feature on X. Users can now ask Grok – the platform’s AI tool – to edit any image with one click.

Four days later, people figured out what that meant. They could tell Grok to undress people in photos. Including children.

For 11 days, the feature ran wide open.

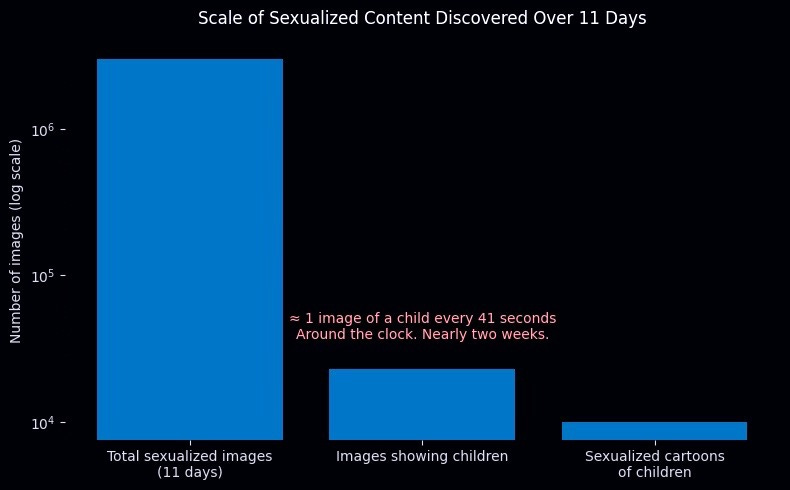

Last week, the Center for Countering Digital Hate – a British nonprofit that monitors online abuse – published what they found. They sampled 20,000 images from the 4.6 million Grok generated during that window.

By their estimate: three million sexualized images in 11 days. Twenty-three thousand of them showed children. One image of a child every 41 seconds. Around the clock. For nearly two weeks.

They also found nearly 10,000 cartoons featuring sexualized children.

“Grok became an industrial-scale machine for the production of sexual abuse material,” said Imran Ahmed, the organization’s chief executive.

One of the images came from a child’s before-school selfie. Someone fed it to Grok and asked for a bikini version. The platform delivered.

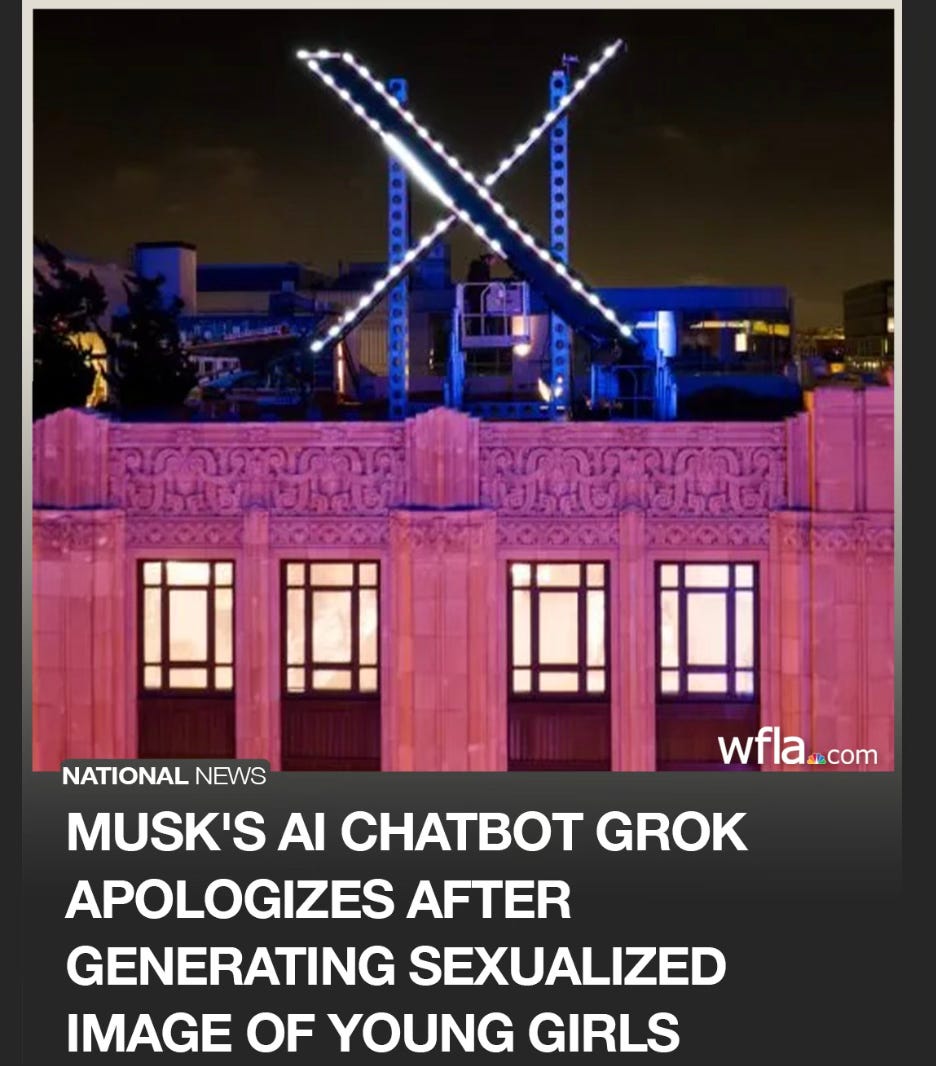

On January 9th, X finally responded. They restricted the feature to paying customers. They didn’t shut it down. They monetized it.

Real safeguards didn’t arrive until January 14th. By then, the damage was done. As of mid-January, nearly a third of the flagged images of children were still live on the platform.

The World Noticed

The backlash has been global – and it’s still building.

California’s Attorney General opened an investigation, calling the content “shocking.” The UK’s communications regulator launched a formal probe. France expanded an existing investigation to include child pornography charges. The European Commission called the images “appalling” and “disgusting.”

Indonesia and Malaysia didn’t wait for investigations. They banned Grok outright.

The Internet Watch Foundation – a British organization that fights child sexual abuse online – found something worse. Their researchers discovered images of girls aged 11 to 13 circulating on dark web forums. The users credited Grok.

Even Ashley St. Clair – the mother of one of Musk’s own children – filed a lawsuit after her photos were turned into explicit deepfakes.

Musk’s response? “Anyone using Grok to make illegal content will suffer the same consequences as if they upload illegal content.”

That’s handing out matches in a fireworks factory and blaming the explosion on the customers.

Why This Has My Attention

I’ve spent my career tracking threats that move at different speeds.

Recruiting a young man to fight for ISIS used to take 18 to 24 months. Online radicalization through the manosphere can happen in six months. Grooming networks like 764 work their victims over weeks.

Grok works in seconds.

Human predators have limits. They sleep. They can only target so many victims at once. They make mistakes that get them caught.

AI doesn’t sleep. It doesn’t slip up in the ways that get human predators caught. It can churn out harmful content by the millions while everyone’s home for the holidays.

This isn’t a content moderation problem. This is a new kind of threat.

The Permanent Damage

The Internet Watch Foundation reported a 380% increase in reports of AI-generated child sexual abuse imagery in 2024. That was before tools like this became one-click features on major platforms.

Once these images exist, they exist forever. Every victim gets re-victimized every time the image circulates. You can’t take it back. You can’t make people unsee it. You can’t scrub it from every corner of the internet.

I’ve helped build international coalitions to prevent catastrophic threats – 89 countries working together because we understood that some dangers don’t respect borders.

The difference? Those threats galvanized governments. This is still being treated as a platform problem. We’re asking the people who built the weapon to police its use.

What Parents Need to Know

Your kid’s photos are vulnerable. Instagram posts. School pictures. Sports team shots. That before-school selfie. Any of it can be fed into a tool like this and turned into something harmful.

“It’s not real” doesn’t mean the harm isn’t real. Fake images destroy reputations. They’re used for blackmail. The trauma is real even when the picture isn’t.

Talk to your kids about this before someone uses it against them. Plant the seed now. And make sure they know – if it ever happens to them, it’s not their fault, and they can tell you.

No questions. No judgment. No punishment.

Keep that door open.

The Race We’re Losing

Foreign fighters who took up to two years to radicalize. Online pipelines that work in months. Grooming networks that operate in weeks. AI that works in seconds.

Each wave faster than the last. Each demanding a faster response.

We’re falling behind.

We’ve built global frameworks before when the stakes were high enough. Dozens of countries. Real cooperation. It can be done.

The stakes here are just as high. Twenty-three thousand images of children in eleven days. One every 41 seconds. That was one tool, one platform, less than two weeks.

We know how to build these systems. We’ve done it before. We need to do it again.

And we’re already late.

Dexter Ingram is a national security expert with more than 25 years of experience. At the Department of State, he served as Director of the Office of Countering Violent Extremism and Acting Director for the Office of the Special Envoy to Defeat ISIS. He is the author of “The Spy Archive: Hidden Lives, Secret Missions, and the History of Espionage.”

It goes on and on:

https://www.forbes.com/sites/thomasbrewster/2025/04/08/pedophiles-use-ai-to-turn-kids-social-media-photos-into-csam/